NECRO MMO

Project Description

NECRO MMO is my main ongoing project. It’s a high-performance, C++-powered suite for building an MMORPG, born out of a fascination with the massive technical hurdles Blizzard overcame to build World of Warcraft.

The suite is divided into four independently scalable components, sharing common protocols and data models:

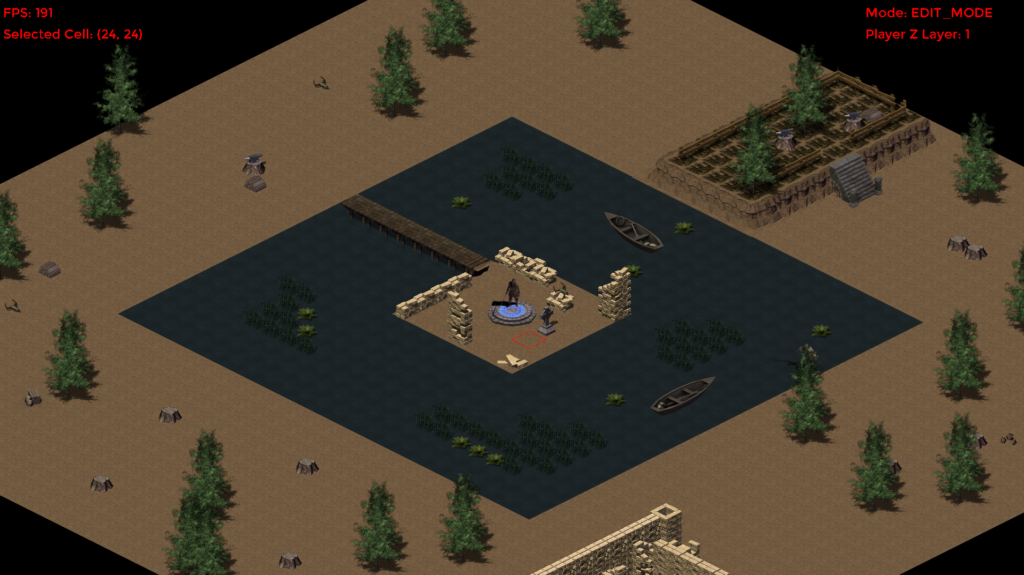

- NECROClient: [Functional] a data-driven C++ game engine that renders the world, captures player input, processes client-side prediction, and manages entity synchronization with the server.

- NECROAuth: [Functional] a high-performance authentication server that uses Boost.Asio for scalable I/O and MySQL as its database. It’s responsible for handling the TLS handshake, logging players in, generating session tokens and acting as the gateway to the game servers.

- NECROWorld: [In Progress] the world game server, which will hold the authoritative world state. It will be designed to handle instance/zone management, AI, scripting, combat resolution, and persistent state management via MySQL.

- NECROHammer: [Functional] a Boost.Asio application and QA component that generates synthetic clients and scripted traffic to benchmark server performance, stress-test the custom TCP library, and validate the load capacity of both servers (Auth & World).

My goal with NECRO MMO is to build a complete, functional, open-source and highly scalable MMORPG backend, working at a low level in order to dive deep into the engineering of this sort of system. I’m writing the core in modern C++, using Boost.Asio for I/O, and I’m architecting around primitives such as sockets, memory management, custom protocol design and a custom game engine.

Components

NECRO Client:

For the game engine, I’ve organized the list of components as layers of the stack. You can click on each layer to be redirected to the respective GitHub folder or file:

- Online: responsible for networking and communication via TCP sockets (AES-GCM encrypted or under TLS) with the servers.

- Game: game related logic that runs inside of the engine, including world loading, entity simulation and object model.

- Animation: sprite animation system that reads from loaded tilesets.

- Entity: base unit of an object that exists inside of a Cell in the world.

- Physics: basic 2D physics for isometric games.

- World Definition: partitioning of the game world and orthographic camera frustum culling data.

- Debug Console: debugging tool to issue commands and receive their output while the engine is running.

- Input System: standard processing of mouse and keyboard input.

- Low-Level GPU Rendering: camera, render targets, debug drawing and lights.

- Resources Manager: prefabs, tilesets, maps and images.

- Core System: engine start-up, initialization of sub-systems and shutdown.

- SDL2 & STL: as the cross-hardware interface.

The engine is designed to keep running even in critical conditions, such as missing or corrupted assets, prefabs or animations. The next step, once the World Server is up and running, will be Entity Replication for AI and Players.

NECRO Auth:

NECROAuth is a performance-focused authentication server that uses Boost.Asio for scalable I/O and MySQL for the database. It’s responsible for handling communication with clients under TLS, logging players in, generating session tokens and acting as the gateway to the game servers.

I’ve organized the list of components as layers of the stack. You can click on each layer to be redirected to the respective GitHub folder or file:

- Auth Session: as the state-driven handler that represents connected peers during the authentication process.

- Socket Manager: a global manager of NetworkThreads, responsible for accepting connections and directing them to NetworkThreads.

- Network Message: a higher-level view on packets, designed specifically for sending and receiving data over a network.

- Packet Manager: stream buffer that packs C++ data types (integers, floats, strings) into a raw byte array.

- TLS: setup to safely communicate login info.

- Network Thread: template-based manager designed to handle a subset of network connections on a dedicated thread using Boost.Asio and optionally OpenSSL as well.

- TCP Library: provides both a modern asynchronous implementation (via Boost.Asio) and a lower-level, cross-platform wrapper (via Berkeley Sockets/WinSock).

- Core System: server start-up, initialization of sub-systems and shutdown.

- Boost.Asio & STL: as the application’s foundation.

The Auth Server includes idle timeout checks, TLS handshake timeouts and IP based spam prevention.
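The source does not describe how the IP based spam prevention works, so the following is only one plausible shape for it: a sliding-window limiter that rejects an address once it has opened too many connections in a short period. `IpSpamGuard`, its parameters and the window policy are all hypothetical names and choices, not taken from the NECROAuth code.

```cpp
#include <cassert>
#include <chrono>
#include <cstddef>
#include <deque>
#include <string>
#include <unordered_map>

// Hypothetical per-IP limiter: allows at most maxAttempts connections
// from one address inside a sliding time window.
class IpSpamGuard {
public:
    using Clock = std::chrono::steady_clock;

    IpSpamGuard(std::size_t maxAttempts, std::chrono::milliseconds window)
        : maxAttempts_(maxAttempts), window_(window) {
        assert(maxAttempts > 0);
    }

    // Returns true if a connection from `ip` should be accepted at `now`.
    bool Allow(const std::string& ip, Clock::time_point now) {
        auto& attempts = attempts_[ip];
        // Forget attempts that have fallen out of the window.
        while (!attempts.empty() && now - attempts.front() >= window_) {
            attempts.pop_front();
        }
        if (attempts.size() >= maxAttempts_) {
            return false;  // too many recent attempts: reject
        }
        attempts.push_back(now);
        return true;
    }

private:
    std::size_t maxAttempts_;
    std::chrono::milliseconds window_;
    std::unordered_map<std::string, std::deque<Clock::time_point>> attempts_;
};
```

Passing the current time in as a parameter, rather than reading the clock inside `Allow`, keeps the limiter easy to test without sleeping.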

Flow of Authentication:

NECROAuth is set up to listen for incoming connections. The game client connects to the NECROAuth server, initializing the connection. Once the AuthServer receives the request, SSLAsyncAcceptCallback creates an AuthSession and queues the new connection in the least busy NetworkThread. From there, the NetworkThread itself manages the connection.
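Picking the least busy NetworkThread can be sketched as a minimum search over per-thread connection counts. This is a simplified illustration, not the repository's code: `NetworkThreadStub`, `SelectLeastBusyThread` and `DispatchNewConnection` are hypothetical names, and "busy" is assumed here to mean "number of managed connections".

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a NetworkThread: it only tracks how many
// connections it currently manages.
struct NetworkThreadStub {
    std::size_t connectionCount = 0;
    void QueueNewConnection() { ++connectionCount; }
};

// Returns the index of the thread with the fewest connections.
// On a tie, the first such thread wins.
std::size_t SelectLeastBusyThread(const std::vector<NetworkThreadStub>& threads) {
    assert(!threads.empty());
    const auto it = std::min_element(
        threads.begin(), threads.end(),
        [](const NetworkThreadStub& a, const NetworkThreadStub& b) {
            return a.connectionCount < b.connectionCount;
        });
    return static_cast<std::size_t>(it - threads.begin());
}

// What an accept callback might do once a new socket is ready.
std::size_t DispatchNewConnection(std::vector<NetworkThreadStub>& threads) {
    const std::size_t index = SelectLeastBusyThread(threads);
    threads[index].QueueNewConnection();
    return index;
}
```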

The NetworkThreads run every millisecond and constantly update the sockets they manage. AuthSession Update() contains the state machine that drives the exchange. For a socket that has just connected and been queued in a NetworkThread, TLS is set up and the handshake is attempted. On success, the AuthSession state machine is updated and the NetworkThread, on its next update, starts the AsyncRead. If the handshake fails or takes too long to complete, the client is timed out.
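The handshake part of that state machine might look roughly like the sketch below. It models only the transitions (no sockets, no TLS), and the state names, `SessionSketch` and the timeout parameter are assumptions made for illustration rather than the actual AuthSession members.

```cpp
#include <cassert>
#include <chrono>

enum class SessionState { Handshaking, ReadyToRead, Reading, TimedOut };

// Hypothetical, trimmed-down session: the real AuthSession owns a socket
// and a TLS stream; here only the state transitions are modeled.
class SessionSketch {
public:
    using Clock = std::chrono::steady_clock;

    SessionSketch(Clock::time_point connectedAt,
                  std::chrono::milliseconds handshakeTimeout)
        : connectedAt_(connectedAt), handshakeTimeout_(handshakeTimeout) {
        assert(handshakeTimeout.count() > 0);
    }

    // Would be invoked by the TLS handshake completion handler on success.
    void OnHandshakeCompleted() {
        if (state_ == SessionState::Handshaking) {
            state_ = SessionState::ReadyToRead;
        }
    }

    // Called by the owning NetworkThread on every tick.
    SessionState Update(Clock::time_point now) {
        switch (state_) {
        case SessionState::Handshaking:
            if (now - connectedAt_ > handshakeTimeout_) {
                state_ = SessionState::TimedOut;  // handshake took too long
            }
            break;
        case SessionState::ReadyToRead:
            // The real code would start the asynchronous read here.
            state_ = SessionState::Reading;
            break;
        case SessionState::Reading:
        case SessionState::TimedOut:
            break;
        }
        return state_;
    }

private:
    Clock::time_point connectedAt_;
    std::chrono::milliseconds handshakeTimeout_;
    SessionState state_ = SessionState::Handshaking;
};
```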

Meanwhile, the Client holds the other end of the handshake. If the handshake succeeds, the Client sends the greet packet to the server. The Greet Packet (with ID = LOGIN_GATHER_INFO) consists of a header (PacketID, AuthResult, Size) followed by a payload containing the username and client version.
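To make the packet layout concrete, here is a sketch of how such a greet packet could be serialized. The field widths, the little-endian byte order, the length-prefixed string, the three-byte version and the numeric value of `LOGIN_GATHER_INFO` are all assumptions for illustration; the source only names the fields.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical numeric value: the source only names the ID.
constexpr std::uint8_t LOGIN_GATHER_INFO = 0x01;
// Assumed header layout: PacketID (1) + AuthResult (1) + Size (2).
constexpr std::size_t kHeaderSize = 4;

// Minimal stream buffer that packs values into a raw byte array.
class PacketWriter {
public:
    void WriteU8(std::uint8_t v) { bytes_.push_back(v); }
    void WriteU16(std::uint16_t v) {  // little-endian
        bytes_.push_back(static_cast<std::uint8_t>(v & 0xFF));
        bytes_.push_back(static_cast<std::uint8_t>(v >> 8));
    }
    void WriteString(const std::string& s) {  // 1-byte length prefix + bytes
        assert(s.size() <= 0xFF);
        WriteU8(static_cast<std::uint8_t>(s.size()));
        bytes_.insert(bytes_.end(), s.begin(), s.end());
    }
    const std::vector<std::uint8_t>& Bytes() const { return bytes_; }

private:
    std::vector<std::uint8_t> bytes_;
};

std::vector<std::uint8_t> BuildGreetPacket(const std::string& username,
                                           std::uint8_t major,
                                           std::uint8_t minor,
                                           std::uint8_t patch) {
    // Payload: client version followed by the username.
    PacketWriter payload;
    payload.WriteU8(major);
    payload.WriteU8(minor);
    payload.WriteU8(patch);
    payload.WriteString(username);

    // Header first, then the payload bytes.
    PacketWriter packet;
    packet.WriteU8(LOGIN_GATHER_INFO);
    packet.WriteU8(0);  // AuthResult: assumed unused in a request
    packet.WriteU16(static_cast<std::uint16_t>(payload.Bytes().size()));
    for (std::uint8_t b : payload.Bytes()) {
        packet.WriteU8(b);
    }
    return packet.Bytes();
}
```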

The AuthServer is set up with a Handler map, which associates each PacketID with an AuthSession status, a PacketSize and a Callback, so that when a packet arrives, the Server can quickly look it up by PacketID and retrieve the relevant information.
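A handler map of this kind can be sketched with a hash map keyed by PacketID. The types below (`HandlerSession`, `Handler`, `Dispatch`), the packet ID value and the interpretation of PacketSize as a minimum size are hypothetical simplifications, not the server's real definitions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <unordered_map>
#include <vector>

enum class SessionStatus { GatherInfo, LoginProof, Closed };

// Hypothetical stand-in for AuthSession.
struct HandlerSession {
    SessionStatus status = SessionStatus::GatherInfo;
};

using Packet = std::vector<std::uint8_t>;

struct Handler {
    SessionStatus requiredStatus;  // state the session must be in
    std::size_t minPacketSize;     // assumed to be a lower bound
    std::function<bool(HandlerSession&, const Packet&)> callback;
};

using HandlerMap = std::unordered_map<std::uint8_t, Handler>;

// Looks up the handler by PacketID (assumed to be the first byte),
// validates session state and size, then runs the callback.
bool Dispatch(const HandlerMap& handlers, HandlerSession& session,
              const Packet& packet) {
    if (packet.empty()) return false;
    const auto it = handlers.find(packet[0]);
    if (it == handlers.end()) return false;  // unknown PacketID
    const Handler& handler = it->second;
    assert(handler.callback);
    if (session.status != handler.requiredStatus) return false;
    if (packet.size() < handler.minPacketSize) return false;
    return handler.callback(session, packet);
}

HandlerMap MakeHandlers() {
    HandlerMap handlers;
    // 0x01 stands in for LOGIN_GATHER_INFO (value is an assumption).
    handlers.emplace(std::uint8_t{0x01},
                     Handler{SessionStatus::GatherInfo, 4,
                             [](HandlerSession& s, const Packet&) {
                                 s.status = SessionStatus::LoginProof;
                                 return true;
                             }});
    return handlers;
}
```

Checking the expected session status before running the callback means a packet that arrives out of order is rejected without touching handler logic.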

The AuthServer will receive the GreetPacket, triggering the AsyncReadCallback. It will then identify it, validate its size, and process it via the Handler’s Callback. For the greet packet we’re currently considering, the server will call HandleAuthLoginGatherInfoPacket. This method sanity-checks the packet and then parses the packet’s data into the AuthSession’s data. Once that is done, the Server needs to check in the database whether the login (or username) exists, so it queues up a request to the DBWorker. The DBRequest also includes the Callback to execute once the request is completed and the query result is returned; in this case, it will be DBCallback_AuthLoginGatherInfoPacket.

The DBWorker, which runs its ThreadRoutine in its own thread, will pick up the request, execute the SQLStatement and push the result onto the ResponseQueue (the request we’re considering is not marked as “FireAndForget”, so the result of the query is saved in the ResponseQueue).

Meanwhile, the main thread is running several handlers, including the DBCallbackCheckHandler. This handler safely drains the response queue and dispatches (posts) each callback to be executed on the NetworkThread’s io_context associated with the AuthSocket that created the DBRequest.
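The request and response queues can be sketched as follows. This is a simplified model under several assumptions: results are plain strings, one mutex guards both queues, callbacks run directly instead of being posted to an io_context, and the worker loop is reduced to a `ProcessOne` step so the example stays deterministic. All type and method names are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <mutex>
#include <queue>
#include <string>
#include <utility>

struct DBRequest {
    std::string sql;
    bool fireAndForget;  // if true, the result is discarded
    std::function<void(const std::string&)> callback;
};

struct DBResponse {
    std::string result;
    std::function<void(const std::string&)> callback;
};

class DBWorkerSketch {
public:
    // Called from a network thread to queue a query.
    void Enqueue(DBRequest request) {
        assert(request.fireAndForget || request.callback);
        std::lock_guard<std::mutex> lock(mutex_);
        requests_.push(std::move(request));
    }

    // One iteration of the worker's thread routine.
    // Returns false when there was nothing to do.
    bool ProcessOne(const std::function<std::string(const std::string&)>& execute) {
        DBRequest request;
        {
            std::lock_guard<std::mutex> lock(mutex_);
            if (requests_.empty()) return false;
            request = std::move(requests_.front());
            requests_.pop();
        }
        // Run the query without holding the lock.
        std::string result = execute(request.sql);
        if (!request.fireAndForget) {
            std::lock_guard<std::mutex> lock(mutex_);
            responses_.push(DBResponse{std::move(result), std::move(request.callback)});
        }
        return true;
    }

    // Called from the main thread: drains the response queue and runs each
    // callback. The real server would post it to the owning io_context.
    std::size_t DispatchResponses() {
        std::queue<DBResponse> ready;
        {
            std::lock_guard<std::mutex> lock(mutex_);
            std::swap(ready, responses_);
        }
        std::size_t count = 0;
        for (; !ready.empty(); ready.pop(), ++count) {
            ready.front().callback(ready.front().result);
        }
        return count;
    }

private:
    std::mutex mutex_;
    std::queue<DBRequest> requests_;
    std::queue<DBResponse> responses_;
};
```

Swapping the response queue out under the lock and running callbacks afterwards keeps the critical section short, so a slow callback never blocks the worker thread.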

So finally DBCallback_AuthLoginGatherInfoPacket is executed, and we’re back in the AuthSession execution logic. It is time to reply to the client. We fetch the SQL result and in case the username exists, we continue with the authentication, otherwise we send an “authentication failed” packet to the client.

The Client reads packets in the same fashion (using Handlers): once a packet arrives, the ReadCallback is executed, the packet is identified, its size is validated and the handler’s Callback is executed, which in this case is HandlePacketAuthLoginGatherInfoResponse. Here we check the AuthResult, which, if successful, sets up the next step of the authentication.

Next, the client needs to send the account’s password to continue with the authentication process, but that is not all. A successful authentication must also set up the communication that will happen between the Client and the World Server. Since we’re not going to use TLS for the World Server, it’s still a good idea to encrypt the game packets. We do that via AES-GCM 128-bit encryption. This means that it’s crucial to properly set up the Session Key (shared secret) and the Initialization Vector (IV) prefix on both sides of the communication.

The Session Key is generated by the Auth Server, saved to the Database (so the World Server can retrieve it) and sent to the Client (while still under TLS). The Client’s IV prefix is generated by the Client and sent to the Auth Server. The Server’s IV prefix, which must differ from the Client’s, is currently generated by the AuthServer to ensure the fundamental rule of AES-GCM is not broken: an IV must never be reused with the same key. It is not used yet, as the World Server is not developed (Feb 2026).
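One common way to turn an IV prefix into full per-packet IVs is to append a message counter, which is what the sketch below assumes. The 4-byte prefix, the 8-byte big-endian counter and the `NonceSequence` type are illustrative assumptions; only the 96-bit total length is standard, being the IV size recommended for GCM. The point it demonstrates is that two sides sharing one key never produce the same IV as long as their prefixes differ.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>

constexpr std::size_t kNonceSize = 12;  // 96-bit IV, recommended for GCM
constexpr std::size_t kPrefixSize = 4;  // assumed prefix width

using Nonce = std::array<std::uint8_t, kNonceSize>;
using IvPrefix = std::array<std::uint8_t, kPrefixSize>;

// Produces IVs of the form: prefix || 64-bit big-endian counter.
class NonceSequence {
public:
    explicit NonceSequence(const IvPrefix& prefix) : prefix_(prefix) {}

    // Returns the next IV and advances the counter, so one sender
    // never emits the same IV twice.
    Nonce Next() {
        // A real session would have to rekey before the counter wraps.
        assert(counter_ != std::numeric_limits<std::uint64_t>::max());
        Nonce nonce{};
        for (std::size_t i = 0; i < kPrefixSize; ++i) {
            nonce[i] = prefix_[i];
        }
        for (std::size_t i = 0; i < 8; ++i) {
            nonce[kPrefixSize + i] =
                static_cast<std::uint8_t>(counter_ >> (56 - 8 * i));
        }
        ++counter_;
        return nonce;
    }

private:
    IvPrefix prefix_;
    std::uint64_t counter_ = 0;
};
```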

So in HandlePacketAuthLoginGatherInfoResponse, the client prepares the next packet to proceed with the authentication: it generates its IV prefix, appends it to the packet, appends the password as well (currently in cleartext, but that’s just temporary) and sends the packet to the AuthServer.

The AuthServer will handle this packet via HandleAuthLoginProofPacket, which asks the database for the user’s password and then handles the DBCallback. Passwords are (currently) naively checked and, in case of a match, we continue to the final part of the authentication. The AuthServer makes sure to generate a random IV prefix that is different from the client’s, then it generates the Session Key, and also generates a one-time use GreetCode that the Client will use to greet the WorldServer.
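The generation step could be sketched like this. The widths of the IV prefix and GreetCode, and the names `LoginSecrets` and `GenerateLoginSecrets`, are assumptions. The sketch uses `std::random_device` only to stay self-contained; it is not guaranteed to be cryptographically secure, so a real server would draw these bytes from a CSPRNG such as OpenSSL's `RAND_bytes`.

```cpp
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <random>

using IvPrefix = std::array<std::uint8_t, 4>;     // assumed width
using SessionKey = std::array<std::uint8_t, 16>;  // AES-128 key size
using GreetCode = std::array<std::uint8_t, 8>;    // assumed width

// Fills an array with random bytes. Illustration only: std::random_device
// is NOT guaranteed to be a cryptographically secure source.
template <std::size_t N>
std::array<std::uint8_t, N> RandomBytes() {
    std::random_device rd;
    std::array<std::uint8_t, N> out{};
    for (auto& b : out) {
        b = static_cast<std::uint8_t>(rd() & 0xFF);
    }
    return out;
}

struct LoginSecrets {
    SessionKey sessionKey;
    IvPrefix serverIvPrefix;
    GreetCode greetCode;
};

LoginSecrets GenerateLoginSecrets(const IvPrefix& clientIvPrefix) {
    LoginSecrets secrets{};
    secrets.sessionKey = RandomBytes<16>();
    secrets.greetCode = RandomBytes<8>();
    // Redraw until the server prefix differs from the client's, so both
    // sides can never build the same IV under the shared key.
    do {
        secrets.serverIvPrefix = RandomBytes<4>();
    } while (secrets.serverIvPrefix == clientIvPrefix);
    assert(secrets.serverIvPrefix != clientIvPrefix);
    return secrets;
}
```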

All of that is saved in the Database, so that the World Server will be able to retrieve the SessionKey (using the GreetCode) and the AuthServer IV prefix. Then, we send the packet containing the Session Key and GreetCode to the Client (the Server IV prefix is not sent yet).

The Client receives the packet and executes HandlePacketAuthLoginProofResponse, which completes the authentication by saving the SessionKey and GreetCode in memory. The connection between the Client and the AuthServer is then dropped.

To do next: the Client will connect to the World Server. The World Server will receive the first packet as [GREETCODE | ENCRYPTED_PACKET]. Using the GREETCODE, the World Server can retrieve the SessionKey used to decrypt the packet, along with the Server IV prefix generated by the AuthServer, and start the communication.
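Since this part is not implemented yet, the following is only a sketch of how the first packet could be split and matched. The 8-byte GreetCode width, the in-memory `PendingSessions` map standing in for the database, and all type and function names are assumptions. Decryption itself is left out.

```cpp
#include <algorithm>
#include <array>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <vector>

constexpr std::size_t kGreetCodeSize = 8;  // assumed width
using GreetCode = std::array<std::uint8_t, kGreetCodeSize>;

struct PendingSession {
    std::array<std::uint8_t, 16> sessionKey;
    std::array<std::uint8_t, 4> serverIvPrefix;
};

// Stand-in for the database rows written by the Auth Server.
using PendingSessions = std::map<GreetCode, PendingSession>;

struct FirstPacket {
    PendingSession session;
    std::vector<std::uint8_t> ciphertext;  // still encrypted
};

// Splits [GREETCODE | ENCRYPTED_PACKET] and looks up the session.
// Returns false if the packet is too short or the code is unknown.
bool AcceptFirstPacket(PendingSessions& pending,
                       const std::vector<std::uint8_t>& bytes,
                       FirstPacket& out) {
    if (bytes.size() <= kGreetCodeSize) return false;  // no payload
    const auto split =
        bytes.begin() + static_cast<std::ptrdiff_t>(kGreetCodeSize);
    GreetCode code{};
    std::copy(bytes.begin(), split, code.begin());
    const auto it = pending.find(code);
    if (it == pending.end()) return false;  // unknown or already used
    out.session = it->second;
    out.ciphertext.assign(split, bytes.end());
    pending.erase(it);  // the GreetCode is one-time use
    assert(pending.find(code) == pending.end());
    return true;
}
```

Erasing the entry on first use is what makes the GreetCode one-time: a replayed first packet finds no matching session and is rejected.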

NECRO World:

NECROWorld will be the World Game Server, the authoritative state that runs the game. Unlike the AuthServer, the World Server does not use TLS; instead, to minimize overhead, it encrypts and decrypts packets using AES-GCM 128-bit.

As of February 2026, only the bare bones of the world server are laid out:

- Network Message: a higher-level view on packets, designed specifically for sending and receiving data over a network.

- AES-GCM: 128-bit encryption layer.

- Packet Manager: stream buffer that packs C++ data types (integers, floats, strings) into a raw byte array.

- Network Thread: template-based manager designed to handle a subset of network connections on a dedicated thread using Boost.Asio and optionally OpenSSL as well.

- TCP Library: provides both a modern asynchronous implementation (via Boost.Asio) and a lower-level, cross-platform wrapper (via Berkeley Sockets/WinSock).

- Core System: server start-up and shutdown.

- Boost.Asio & STL: as the application’s foundation.

NECRO Hammer:

NECROHammer’s goal is to hammer the servers with synthetic clients and scripted traffic to validate throughput and latency under high load. It’s also based on Boost.Asio, and it has proven to be a very useful QA tool for benchmarking and stress-testing the servers.

I’ve organized the list of components as layers of the stack. You can click on each layer to be redirected to the respective GitHub folder or file:

- Hammer Socket: the synthetic client that can connect and perform requests.

- Socket Manager: a global manager of NetworkThreads, responsible for distributing clients to the NetworkThreads.

- Network Message: a higher-level view on packets, designed specifically for sending and receiving data over a network.

- Packet Manager: stream buffer that packs C++ data types (integers, floats, strings) into a raw byte array.

- Optional TLS: setup to hammer both the Auth and World Server.

- Network Thread: template-based manager designed to handle a subset of network connections on a dedicated thread using Boost.Asio and optionally OpenSSL as well.

- TCP Library: provides both a modern asynchronous implementation (via Boost.Asio) and a lower-level, cross-platform wrapper (via Berkeley Sockets/WinSock).

- Core System: client start-up, initialization and shutdown.

- Boost.Asio & STL: as the application’s foundation.